JSONB in Postgres is fine; I’ve been using it for years. Much better than letting MongoDB anywhere near the stack.

Then again, Postgres is basically an OS at this point, enough to compete with Emacs for meme potential. And I say that as a happy Postgres user.

Wait until people learn that you can put a web server INSIDE Postgres :)

https://betterprogramming.pub/what-happens-if-you-put-http-server-inside-postgres-a1b259c2ce56

My principal dev asked if we could figure out how to invoke Lambda functions from within Postgres trigger functions.

I was like, “Probably. But it’s like putting a diving board at the top of the Empire State building… doable, but a bad plan all around.”

Sounds like someone heard about containers through a bad game of telephone!

PostgreSQL can even run WebAssembly (with an extension)

Classically, a lot of RDBMSen are. MySQL held back for the most part, though it’s not necessarily better for it.

Postgres handles NoSQL workloads better than many dedicated NoSQL database management systems. I kept telling another team to at least evaluate it for that purpose, but they knew better, and now they are stuck managing the MongoDB stack because they are the only ones who use it. Postgres can do everything they use out of the box. It just doesn’t sound as fancy and hip.

JSON data within a database is perfectly fine and has completely justified use cases. JSON is just a way to structure data. If it’s bespoke data or something that doesn’t need to be structured in a table, a JSON string can keep all that organized.

We use it for intake questionnaire data. It’s something that needs to be on file for record purposes, but it doesn’t need to be queried aside from simply being loaded with the rest of the record.

Edit: just to add, even MS SQL/Azure SQL can both query and index within a JSON object. Of course, Postgres’ JSONB data type is far better suited for that.

While I understand your point, there’s a mistake I see far too often in the industry: using relational DBs where the data model is better suited to other kinds of DBs. For example, JSON documents are better stored in document DBs like MongoDB. I realize your use case doesn’t involve querying the JSON, in which case it can simply be stored as text. Similar mistakes are made with time-series data, key-value data, and directory-type data.

I’m not particularly angry at such (ab)uses of RDBs. But you’ll probably get better results with NoSQL DBs. Even in cases that involve multiple data models, you could combine several database products to get the best results. Or, even better, there are adapters and extensions that let an RDBMS behave like different types at the same time. For example, FerretDB makes Postgres behave like MongoDB, PostGIS turns it into a geographic DB, etc.

Using Relational DBs where the data model is better suited to other sorts of DBs.

This is true if most or all of your data is such. But when you have only a few bits of data here and there, it’s still better to use the RDB.

For example, in a surveillance system (think Blue Iris, ZoneMinder, or Shinobi) you want to use an RDB, but you’re going to have to store JSON data from alerts, as well as other objects within the frame when alerts come in. Something like this:

`{ "detection": { "object": "person", "time": "2024-07-29 11:12:50.123", "camera": "LemmyCam", "coords": { "x": "23", "y": "100", "w": "50", "h": "75" } }, "other_objects": [ <repeat above format multiple times> ] }`

While it’s possible to store this in a flat format in a table, the question is why you would want to. Postgres’ JSONB data type stores the data about as efficiently as anything else, while also making it queryable. It also saves you from reworking the table structure if you need to expand the types of data points used by the detection software.

It definitely isn’t a solution for most things, but it’s 100% valid to use.

There’s also the case where you just want to store JSON data exactly as it’s generated by whatever source, without translating it in any way: just store the actual data in its “raw” form. This allows you to do that too.

Edit: just to add to the example JSON, the other advantage is that it allows a variable number of objects within the array without having to accommodate it in the table. I can’t count how many times I’ve seen tables with “extra1, extra2, extra3, extra4, …” because they knew there would be extra data at some point, but no idea what it would be.
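To make the alert example concrete, here is a minimal sketch in Python that uses SQLite's built-in JSON functions as a stand-in for Postgres JSONB (in Postgres you would reach for the `->>` operator and functions like `jsonb_array_elements` instead). The `alerts` table, the sample payloads, and the helper functions are hypothetical. Each alert is stored exactly as produced, and `json_each` walks the variable-length `other_objects` array, so no `extra1, extra2, …` columns are needed.

```python
import json
import sqlite3

# Hypothetical alerts in the shape sketched above. "other_objects" holds a
# variable number of entries, so the table needs no extra1/extra2/... columns.
ALERTS = [
    {"detection": {"object": "person", "time": "2024-07-29 11:12:50.123",
                   "camera": "LemmyCam",
                   "coords": {"x": "23", "y": "100", "w": "50", "h": "75"}},
     "other_objects": [
         {"object": "car", "coords": {"x": "200", "y": "40", "w": "120", "h": "60"}},
         {"object": "dog", "coords": {"x": "90", "y": "150", "w": "30", "h": "20"}}]},
    {"detection": {"object": "cat", "time": "2024-07-29 11:13:02.500",
                   "camera": "BackDoor",
                   "coords": {"x": "5", "y": "5", "w": "10", "h": "10"}},
     "other_objects": []},
]


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # Store each alert exactly as the detector produced it.
    conn.execute("CREATE TABLE alerts (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
    conn.executemany("INSERT INTO alerts (payload) VALUES (?)",
                     [(json.dumps(a),) for a in ALERTS])
    return conn


def summary(conn):
    """(id, camera, primary object, number of other objects) per alert."""
    return conn.execute("""
        SELECT id,
               json_extract(payload, '$.detection.camera'),
               json_extract(payload, '$.detection.object'),
               json_array_length(payload, '$.other_objects')
        FROM alerts ORDER BY id""").fetchall()


def alerts_with_other_object(conn, kind):
    """Alert ids where any entry of the variable-length array matches `kind`."""
    rows = conn.execute("""
        SELECT DISTINCT a.id
        FROM alerts AS a, json_each(a.payload, '$.other_objects') AS o
        WHERE json_extract(o.value, '$.object') = ?
        ORDER BY a.id""", (kind,)).fetchall()
    return [r[0] for r in rows]
```

Adding a new field to the detector output needs no migration here; older rows simply return `None` for the new path.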


Both Oracle and Postgres have pretty good support for json in SQL.

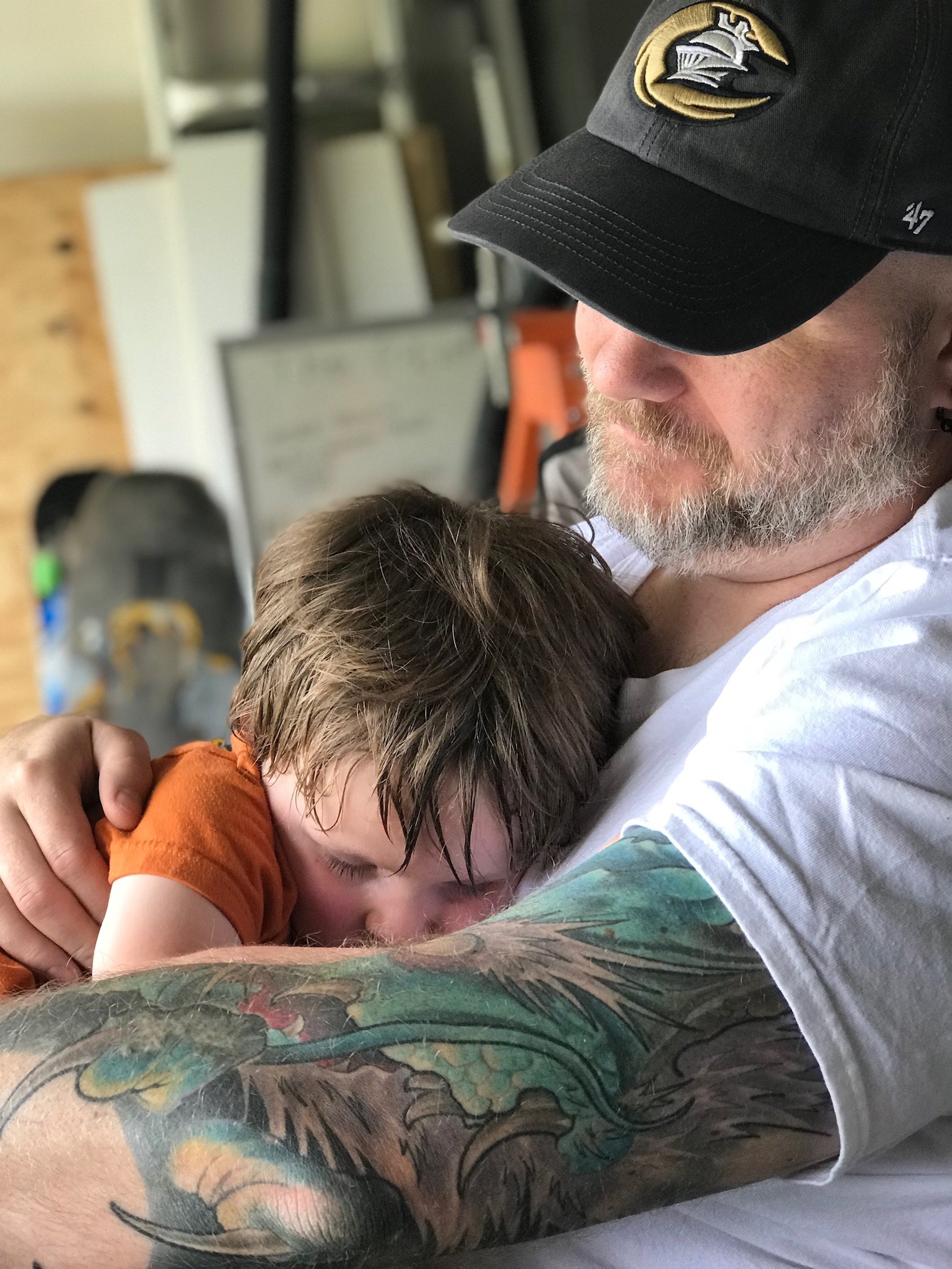

and the plane in the picture is perfectly capable of transporting a plane. What’s your point?

JSON in the DB isn’t an antipattern. It is frequently used in absolutely terrible designs but it is not itself a bad thing.

I’m a data architect and I approve this message.

Carrying the body of a smaller plane in a larger plane isn’t an antipattern either. Airbus does this between body assembly and attaching the wings.

I think plane people call it a fusilage, because they’re weird and like French.

It’s “fuselage”.

It’s called that because it comes from “forme fuselée” (tapered shape), and it’s a French word mainly because we coined it in 1908.

You’re welcome :-)

Why not use nosql if your important data is stored in JSON? You can still do all your fancy little joins and whatnot.

Turn it inside out. Why not use a RDBMS with a NoSQL bit added on the side?

Specifically so you get mature transactional guarantees, indices and constraints that let app developers trust your db.

The alternative is not super exciting though. My experience with NoSQL has been pretty shit so far. Might change this year as the company I’m at has a perfect case for migrating to NoSQL but I’ve been waiting for over a year for things to move forward…

Also, I had a few cases where storing JSON was super appropriate: we had a form and we wanted to store the answers. It made no sense to create tables and shit, since the form itself could change over time! JSON was an elegant way to store the answers. Being able to actually query the JSON via Oracle SQL was like dark magic, and my instincts were all screaming about the obvious trap, but I was rather impressed by the capability.

As long as you have good practices, like storing the form version and such.
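A small sketch of that practice, using Python's `sqlite3` and SQLite's JSON functions rather than Oracle SQL: the answers go in a JSON column guarded by a `json_valid` CHECK constraint, and a `form_version` column tells queries which key to read. The schema, the version numbers, and the renamed `referral`/`referral_source` question are all made up for illustration.

```python
import json
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("""
        CREATE TABLE form_responses (
            id           INTEGER PRIMARY KEY,
            form_version INTEGER NOT NULL,
            -- Reject anything that is not well-formed JSON.
            answers      TEXT NOT NULL CHECK (json_valid(answers))
        )""")
    conn.executemany(
        "INSERT INTO form_responses (form_version, answers) VALUES (?, ?)",
        [(1, json.dumps({"name": "Ana", "referral": "friend"})),
         # Hypothetical v2 of the form renamed one question and added another.
         (2, json.dumps({"name": "Ben", "referral_source": "ad", "newsletter": True}))])
    return conn


def referrals(conn):
    """(name, referral) per response; the version decides which key to read."""
    return conn.execute("""
        SELECT json_extract(answers, '$.name'),
               json_extract(answers, CASE form_version
                                         WHEN 1 THEN '$.referral'
                                         ELSE '$.referral_source' END)
        FROM form_responses ORDER BY id""").fetchall()


def is_accepted(conn, version, raw_answers):
    """Try to store raw answer text; False if the CHECK constraint rejects it."""
    try:
        with conn:  # commit on success, roll back on error
            conn.execute(
                "INSERT INTO form_responses (form_version, answers) VALUES (?, ?)",
                (version, raw_answers))
        return True
    except sqlite3.IntegrityError:
        return False
```

Without the version column, there would be no reliable way to tell whether a missing key means "not answered" or "question did not exist yet".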

SQL blows for hierarchical data though.

Want to fetch a page of posts AND their tags in normalized SQL? Either do a left join and repeat all the post values for every tag or do two round-trip queries and manually join them in code.

If you have the tags in a JSON blob on the post object, you just fetch and decode that.
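Here is a sketch of the two fetch styles side by side, using Python's `sqlite3` as a stand-in for whatever database is actually in play. The `posts`/`tags` schema is hypothetical, and the demo keeps the tags in both a normalized `tags` table and a `tags_json` column purely for comparison; a real design would pick one.

```python
import json
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE posts (
            id        INTEGER PRIMARY KEY,
            title     TEXT NOT NULL,
            tags_json TEXT NOT NULL DEFAULT '[]'   -- denormalized copy, demo only
        );
        CREATE TABLE tags (
            post_id INTEGER NOT NULL REFERENCES posts(id),
            name    TEXT NOT NULL
        );
        INSERT INTO posts VALUES (1, 'JSONB tips', '["postgres", "json"]'),
                                 (2, 'No tags yet', '[]');
        INSERT INTO tags VALUES (1, 'postgres'), (1, 'json');
    """)
    return conn


def page_via_left_join(conn):
    """Normalized: one row per (post, tag), so post columns repeat per tag."""
    rows = conn.execute("""
        SELECT p.id, p.title, t.name
        FROM posts AS p LEFT JOIN tags AS t ON t.post_id = p.id
        ORDER BY p.id, t.rowid""").fetchall()
    posts = {}
    for post_id, title, tag in rows:
        post = posts.setdefault(post_id, {"id": post_id, "title": title, "tags": []})
        if tag is not None:  # an untagged post still yields one row, with tag NULL
            post["tags"].append(tag)
    return list(posts.values())


def page_via_json_column(conn):
    """Denormalized: one row per post; the tag list is decoded client-side."""
    rows = conn.execute("SELECT id, title, tags_json FROM posts ORDER BY id")
    return [{"id": i, "title": t, "tags": json.loads(j)} for i, t, j in rows]
```

Both functions return the same nested structure. The JSON column skips the regrouping step, but it gives up what the separate table provides, such as a foreign key on each tag and an index on tag names for "all posts with tag X" queries.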

I’m no expert in JSON, but don’t you lose the ability to filter it before your application receives it all? With a reasonable amount of data, in SQL you can add a WHERE clause and cut down what you get back, so you could end up processing a lot less data than in your JSON example, even with the duplicated top-table data. Plus, if you’re sensible, you can make sure you’re not bringing back more fields than you need.

It’s entirely possible to sort and filter inside JSON data in most SQL dialects. You can even add indexes.

If there’s commonly used data that would be good for indexing or filtering, you can take a few key values and keep them stored in their own fields.
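A minimal sketch of filtering and indexing inside JSON, using SQLite through Python's `sqlite3` (Postgres offers the same idea through expression indexes on `->>` or a GIN index on a `jsonb` column). The `products` table, the vendor blobs, and the index name are hypothetical. The `WHERE` clause runs in the database, so only matching ids reach the client, and the expression index lets the lookup avoid a full scan.

```python
import json
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, details TEXT NOT NULL)")
    conn.executemany("INSERT INTO products (details) VALUES (?)", [
        (json.dumps({"vendor": "acme", "color": "red", "weight_kg": 1.5}),),
        (json.dumps({"vendor": "globex", "voltage": 230}),),
        (json.dumps({"vendor": "acme", "color": "blue"}),),
    ])
    # Index on an expression inside the JSON. Queries must use the same
    # expression, json_extract(details, '$.vendor'), for the index to apply.
    conn.execute("CREATE INDEX idx_products_vendor "
                 "ON products (json_extract(details, '$.vendor'))")
    return conn


VENDOR_SQL = "SELECT id FROM products WHERE json_extract(details, '$.vendor') = ?"


def ids_for_vendor(conn, vendor):
    """Filtering happens in the database; only matching ids reach the client."""
    return sorted(row[0] for row in conn.execute(VENDOR_SQL, (vendor,)))


def query_plan(conn):
    """The 'detail' text of EXPLAIN QUERY PLAN for the vendor lookup."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + VENDOR_SQL, ("acme",))
    return " ".join(row[-1] for row in rows)
```

If a key is filtered on constantly, copying it into its own regular column, as suggested above, is the more conventional option.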

There are also often functions that can parse structured text like XML or JSON, so you can store data in blobs and retrieve specific values on the database side without pulling all the blobs out to a client. Another nice thing about blobs is that the data can be somewhat flexible in structure. If I need to add a field to something that is a key/value pair inside a blob, I don’t necessarily have to change a bunch of table schemas to get the front-end functionality I’m after. I just add a few keys inside the blob.

In a traditional SQL database, yeah. In various document-oriented (NoSQL) databases, though, you can do that.

Modern relational databases support it too, including indexes. Postgres, for example.

Every major SQL database supports json manipulation nowadays. I know MariaDB and MySQL and SQLite at least support it natively.


If you only join on indexed columns and filter it down to a reasonable number of results it’s easily fast enough.

For true hierarchical structures there are tricks, like using an extra Path table (often called a closure table) with the columns AncestorId, DescendentId, and NumLevel.

If you have this structure:

A -> B -> C

Then you have:

| AncestorId | DescendentId | NumLevel |
|---|---|---|
| A | A | 0 |
| A | B | 1 |
| A | C | 2 |
| B | B | 0 |
| B | C | 1 |
| C | C | 0 |

That way you can find all children below a node, at any depth, with a simple query on the Path table instead of recursive joins.
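The Path table can be maintained and queried with a few statements. This is a minimal sketch in Python with SQLite, assuming a strict tree (each node has one parent) and using the column names from the comment; `add_node`, `descendants`, and `topmost_parent` are hypothetical helpers. Inserting a node copies every ancestor row of its parent one level further down, which reproduces the six rows listed for A -> B -> C.

```python
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # One row per (ancestor, descendant) pair, including each node paired with itself.
    conn.execute("""
        CREATE TABLE Path (
            AncestorId   TEXT    NOT NULL,
            DescendentId TEXT    NOT NULL,
            NumLevel     INTEGER NOT NULL,
            PRIMARY KEY (AncestorId, DescendentId))""")
    # Lookups "upwards" from a node need an index on the descendant column too.
    conn.execute("CREATE INDEX idx_path_descendent ON Path (DescendentId)")
    return conn


def add_node(conn, node, parent=None):
    """Insert `node` under `parent`, keeping the Path table consistent."""
    conn.execute("INSERT INTO Path VALUES (?, ?, 0)", (node, node))
    if parent is not None:
        # Every ancestor of the parent (the parent included) becomes an
        # ancestor of the new node, one level further away.
        conn.execute("""
            INSERT INTO Path (AncestorId, DescendentId, NumLevel)
            SELECT AncestorId, ?, NumLevel + 1 FROM Path WHERE DescendentId = ?""",
            (node, parent))


def descendants(conn, node):
    """All nodes below `node`, at any depth, without recursion."""
    rows = conn.execute("""
        SELECT DescendentId FROM Path
        WHERE AncestorId = ? AND NumLevel > 0
        ORDER BY NumLevel, DescendentId""", (node,)).fetchall()
    return [r[0] for r in rows]


def topmost_parent(conn, node):
    """The root above `node`: its ancestor with the largest NumLevel."""
    return conn.execute("""
        SELECT AncestorId FROM Path WHERE DescendentId = ?
        ORDER BY NumLevel DESC LIMIT 1""", (node,)).fetchone()[0]


conn = build_db()
for node, parent in [("A", None), ("B", "A"), ("C", "B")]:
    add_node(conn, node, parent)
```

Keeping the table consistent on moves and deletes takes more work than this insert-only sketch shows, which is the maintenance cost the next reply objects to.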

The fact that you’d need to keep this structure in SQL and make sure it’s consistent and updated kinda proves my point.

It’s also not really relevant to my example, which involves a single level parent-child relationship of completely different models (posts and tags).

I mean in my case it’s for an international company where customers use this structure and the depth can basically be limitless. So trying to find the topmost parent of a child or getting all children and their children anywhere inside this structure becomes a performance bottleneck.

If you have a single level I really don’t understand the problem. SQL joins aren’t slow at all (as long as you don’t do anything stupid, or you start joining a table with a billion entries with another table with a billion entries without filtering it down to a smaller data subset).

My point is that SQL works with and returns data as a flat table, which is an ill fit for most websites, since they involve many parent-child object relationships. It requires extra queries to fetch one-to-many relationships and post-processing of the result set to match the parents to the children.

I’m just sad that in the decades that SQL has been around, there hasn’t been anything else to replace it. Most NoSQL databases throw out the good (ACID, transactions, indexes) with the bad.

I really don’t see the issue there, you’re only outputting highly specific data to a website, not dumping half the database.

Do you mean your typical CRUD structure? Like having a User object (AuthId, email, name, phone, …), the user has a Location (Country, zip, street, house number, …), possibly Roles or Permissions, related data and so on?

SQL handles those like a breeze and doesn’t care at all about having to resolve the User object across half a dozen other tables (it’s just a 1…1 or 1…n relation, and with an index on the user id foreign key the lookups are cheap). You also don’t just grab all this data, join it, and throw it at the website (or rather the end-user API); you map the data to objects again (JSON in the end).

What does it matter there if you fetched the data from a NoSQL document or from a relational database?

The only thing SQL is not good at is if you have constantly changing fields. Then JSON in SQL or NoSQL makes more sense as you work with documents. For example if you offer the option to create user forms and save form entries. The rigid structure of SQL wouldn’t work for a dynamic use-case like that.

Either do a left join and repeat all the post values for every tag or do two round-trip queries and manually join them in code.

JSON_ARRAYAGG. You’ll get the object all tidied up by the database in one trip, with no need to manipulate it on the receiving client.

I recently tried MariaDB for a project and it was kinda neat, having only really messed with DynamoDB and 2012-era MS SQL. All the modern SQL dialects support it, though MariaDB and MySQL don’t exactly follow the spec.
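SQLite's closest equivalent of `JSON_ARRAYAGG` is `json_group_array`, so here is a hedged sketch of the one-trip approach using Python's `sqlite3` (MySQL/MariaDB would use `JSON_ARRAYAGG` and `JSON_OBJECT`, Postgres `json_agg` and `json_build_object`). The schema and page size are hypothetical. The database builds the whole page, tags included, as a single JSON document.

```python
import json
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
        CREATE TABLE tags (post_id INTEGER NOT NULL REFERENCES posts(id),
                           name    TEXT NOT NULL);
        CREATE INDEX idx_tags_post_id ON tags (post_id);
        INSERT INTO posts VALUES (1, 'JSONB tips'), (2, 'No tags yet');
        INSERT INTO tags VALUES (1, 'postgres'), (1, 'json');
    """)
    return conn


PAGE_SQL = """
SELECT json_group_array(json_object(
         'id', p.id,
         'title', p.title,
         -- json() keeps the inner array as JSON rather than a quoted string
         'tags', json(COALESCE(
             (SELECT json_group_array(t.name) FROM tags AS t WHERE t.post_id = p.id),
             '[]'))))
FROM (SELECT id, title FROM posts ORDER BY id LIMIT ? OFFSET ?) AS p
"""


def fetch_page(conn, limit=20, offset=0):
    """One round trip: the database returns the whole page as a JSON array."""
    (doc,) = conn.execute(PAGE_SQL, (limit, offset)).fetchone()
    return json.loads(doc)
```

SQL does not guarantee the order of elements inside these aggregates, so callers should sort if order matters; Postgres and SQLite 3.44+ also accept an `ORDER BY` inside the aggregate call.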

Document databases are just a big text field with additional index and metadata fields anyway.

He told the truth but people hated him

It’s normal to denormalize data in a relational database. Having a lot of joins can be expensive and non-performant. So it makes sense to use a common structure like JSON for storing the demoralized data. It’s concise, and still human readable and human writable.

Why should I spin up a NoSQL solution when 99% of my data is relational?

As a data engineer, I focus on moralizing my data, reforming it so it is ready to rejoin society

Having a lot of joins can be expensive and non-performant.

Only if you don’t know how to do indexing properly. Normalized data is more performant (less duplicated data, less memory and bandwidth used) if you know how to index.

It may have been true decades ago that denormalized tables were more performant, I don’t know. But today it’s far more common that the phrase “denormalized tables are more performant” is something that’s said by someone that sucks at indexing and/or is just being lazy.
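The indexing point is easy to check with `EXPLAIN QUERY PLAN`. In this Python/SQLite sketch (a hypothetical `users`/`locations` schema standing in for any RDBMS), the foreign-key lookup is a full scan until an index exists on the referencing column. Note that a `REFERENCES` clause does not create that index by itself in SQLite or Postgres, while MySQL's InnoDB does create one.

```python
import sqlite3


def build_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE locations (
            id      INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL REFERENCES users(id),
            country TEXT NOT NULL);
    """)
    return conn


def plan(conn, sql):
    """Concatenate the 'detail' column of EXPLAIN QUERY PLAN."""
    return " ".join(row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql))


QUERY = "SELECT country FROM locations WHERE user_id = 42"

conn = build_db()
before = plan(conn, QUERY)  # full table scan: REFERENCES alone adds no index
conn.execute("CREATE INDEX idx_locations_user_id ON locations (user_id)")
after = plan(conn, QUERY)   # now an index search on user_id
```

The exact plan wording varies between SQLite versions, so it is safer to look for "SCAN" versus the index name than to match the full string.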

But I do put JSON into tables sometimes when the data is going to be very inconsistent between different items and there’s no need to index any of the values in there. Like if different vendors provide different kinds of information about their products, I need to store it somewhere, so just serialize it and put it in there to be read by a program that has abstraction layers to deal with it. It’s never going to perform well if I do a query on it, but if all that’s needed is to display details on one item at a time, it’s fine.

I am currently trying to get deeper into database topics, could you maybe point me somewhere I can read up on that topic a bit more?

RIP Antonov :(

I think this is one of the smaller ones. Mriya had a twin tail; this one doesn’t.

This one is probs still flying

There are valid reasons to do this, of course. But yeah it fits the image.

At least it’s not XML.

pretty much all my side projects

Couchbase DB is just SQLite with JSON. Anyhow, on a more humorous note, it initially reminded me of this:

Don’t talk to me and my Directed Hypergraph Databases again